# YOLOv8 Test (1)

Founder

2024-06-01 20:33:45

- 1. Minimal training workflow

- 1.1 Installing the package

- 1.2 Training demo

- 1.3 Validation

- References

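Most of the YOLOv8 tutorials online are needlessly long-winded. I had used YOLOv2 and YOLOv3 before, but not for a long time, and every tutorial I found this time had pitfalls of one kind or another, so I decided to write up my own version. After a few days of careful reading, I found that the official documentation is still the most reliable and concise source. This post distills it into a minimal quick-start workflow to help anyone who wants to use an object detection algorithm get a project running quickly.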

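1. Minimal training workflow

Most users are building projects for edge devices, and everyday monitoring tasks do not actually demand especially high accuracy. To keep training fast, this post uses YOLOv8n, the smallest ("nano") model listed in the official GitHub repository.

1.1 Installing the package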

pip install ultralytics
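To confirm the install worked before moving on, a quick check like the following can help. This is a minimal sketch that uses only the standard library; the helper name `installed_version` is my own and is not part of ultralytics.

```python
from importlib.metadata import PackageNotFoundError, version


def installed_version(package: str):
    """Return the installed version string of `package`, or None if it is missing."""
    try:
        return version(package)
    except PackageNotFoundError:
        return None


if __name__ == "__main__":
    found = installed_version("ultralytics")
    if found is None:
        print("ultralytics is not installed; run: pip install ultralytics")
    else:
        print(f"ultralytics {found} is installed")
```

Run it with the same interpreter that PyCharm or Jupyter uses, since a package installed into a different environment will show up as missing.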

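1.2 Training demo

The official demo seems to raise a small error when run from PyCharm, while the web-based Jupyter Notebook does not seem to hit it. The cause is most likely multiprocessing rather than the demo itself: when the script runs as a plain Python file on Windows, worker processes need a guarded entry point.

- Official code

from ultralytics import YOLO

# Load a model
model = YOLO("yolov8n.yaml")  # build a new model from scratch
model = YOLO("yolov8n.pt")  # load a pretrained model (recommended for training)

# Use the model
results = model.train(data="coco128.yaml", epochs=3)  # train the model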

- Modified version: wrapping the code in a main function is enough

from ultralytics import YOLO


def main():
    # Load a model
    model = YOLO("yolov8n.yaml")  # build a new model from scratch
    model = YOLO("yolov8n.pt")  # load a pretrained model (recommended for training)

    # Use the model
    model.train(data="coco128.yaml", epochs=5)  # train the model


if __name__ == '__main__':
    main()
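Why the `main()` wrapper helps, as far as I can tell: training starts data loader worker processes, and on Windows Python launches child processes with the `spawn` start method, which re-imports the main script in each child. Without the guard, that import would try to start training again. The sketch below uses only the standard library and reports which start method the current platform uses; `needs_main_guard` is a hypothetical helper name, not something from ultralytics or PyTorch.

```python
import multiprocessing as mp


def needs_main_guard(start_method: str) -> bool:
    """True when child processes re-import the main module instead of forking it."""
    return start_method != "fork"


def main():
    method = mp.get_start_method()
    print(f"start method: {method}")
    if needs_main_guard(method):
        print("keep training code inside main() behind the __name__ guard")
    else:
        print("fork is in use; the guard is optional here but still good practice")


if __name__ == "__main__":
    mp.freeze_support()  # only matters for frozen Windows executables; harmless otherwise
    main()
```

On Windows this should report `spawn`, which matches the wording of the error message ("you are not using fork to start your child processes").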

1.3 Validation

- Complete code

from ultralytics import YOLO


def main():
    # Load a model
    model = YOLO("yolov8n.yaml")  # build a new model from scratch
    model = YOLO("yolov8n.pt")  # load a pretrained model (recommended for training)

    # Use the model
    model.train(data="coco128.yaml", epochs=5)  # train the model
    results = model.val()  # evaluate model performance on the validation set


if __name__ == '__main__':
    main()

- Log

Image sizes 640 train, 640 val

Using 8 dataloader workers

Logging results to runs\detect\train9

Starting training for 5 epochs...

Epoch GPU_mem box_loss cls_loss dfl_loss Instances Size
1/5 5.85G 1.213 1.429 1.258 215 640: 100%|██████████| 8/8 [00:05<00:00, 1.58it/s]
Class Images Instances Box(P R mAP50 mAP50-95): 100%|██████████| 4/4 [00:36<00:00, 9.12s/it]
all 128 929 0.668 0.54 0.624 0.461

Epoch GPU_mem box_loss cls_loss dfl_loss Instances Size
2/5 6.87G 1.156 1.327 1.243 163 640: 100%|██████████| 8/8 [00:04<00:00, 1.64it/s]
Class Images Instances Box(P R mAP50 mAP50-95): 100%|██████████| 4/4 [00:35<00:00, 8.91s/it]
all 128 929 0.667 0.589 0.651 0.487

Epoch GPU_mem box_loss cls_loss dfl_loss Instances Size
...
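The `all` rows in the log carry the validation metrics for each epoch. Going by the header line, the columns are the number of images, the number of labeled instances, then box precision (P), recall (R), mAP50 and mAP50-95. Here is a small sketch for pulling those numbers out of the two rows shown above; `ValSummary` and `parse_summary_row` are hypothetical names of my own, not ultralytics functions.

```python
from typing import NamedTuple


class ValSummary(NamedTuple):
    images: int
    instances: int
    precision: float
    recall: float
    map50: float
    map50_95: float


def parse_summary_row(row: str) -> ValSummary:
    """Parse a row such as 'all 128 929 0.668 0.54 0.624 0.461'."""
    parts = row.split()
    if len(parts) != 7 or parts[0] != "all":
        raise ValueError(f"unexpected summary row: {row!r}")
    images, instances = int(parts[1]), int(parts[2])
    precision, recall, map50, map50_95 = (float(p) for p in parts[3:])
    return ValSummary(images, instances, precision, recall, map50, map50_95)


if __name__ == "__main__":
    epoch1 = parse_summary_row("all 128 929 0.668 0.54 0.624 0.461")
    epoch2 = parse_summary_row("all 128 929 0.667 0.589 0.651 0.487")
    print(f"mAP50 change from epoch 1 to 2: {epoch2.map50 - epoch1.map50:+.3f}")
```

Between the first two epochs, recall and both mAP figures go up while precision stays essentially flat, which is a reasonable sign that fine-tuning from `yolov8n.pt` is working.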

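- Prediction results

The returned results are fairly comprehensive: they include the detected boxes, the original image, its shape, and so on.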

results:
[{ '_keys': . at 0x0000023FA2FC0350>,
  'boxes': ultralytics.yolo.engine.results.Boxes
    type: torch.Tensor
    shape: torch.Size([6, 6])
    dtype: torch.float32
    tensor([[2.40000e+01, 2.26000e+02, 8.02000e+02, 7.58000e+02, 8.75480e-01, 5.00000e+00],
            [4.80000e+01, 3.97000e+02, 2.46000e+02, 9.06000e+02, 8.74487e-01, 0.00000e+00],
            [6.70000e+02, 3.79000e+02, 8.10000e+02, 8.77000e+02, 8.53311e-01, 0.00000e+00],
            [2.19000e+02, 4.06000e+02, 3.44000e+02, 8.59000e+02, 8.16101e-01, 0.00000e+00],
            [0.00000e+00, 2.54000e+02, 3.20000e+01, 3.25000e+02, 4.91605e-01, 1.10000e+01],
            [0.00000e+00, 5.50000e+02, 6.40000e+01, 8.76000e+02, 3.76493e-01, 0.00000e+00]], device='cuda:0'),
  'masks': None,
  'names': { 0: 'person',
             1: 'bicycle',
             ...
             79: 'toothbrush'},
  'orig_img': array([[[122, 148, 172],
                      [120, 146, 170],
                      [125, 153, 177],
                      ...,
                      ...,
                      [ 99, 89, 95],
                      [ 96, 86, 92],
                      [102, 92, 98]]], dtype=uint8),
  'orig_shape': (1080, 810),
  'path': '..\\bus.jpg',
  'probs': None,
  'speed': {'inference': 30.916452407836914, 'postprocess': 2.992391586303711, 'preprocess': 3.988981246948242}}]
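Each row of the `boxes` tensor above appears to hold six values: the corner coordinates `x1, y1, x2, y2` in pixels, a confidence score, and a class index into `names`. That column order is my reading of the printed output, not something the post states. The sketch below decodes the rows with plain Python, so it runs without a GPU or ultralytics. The values are copied from the output above, `decode_rows` is a hypothetical helper, and the names for classes 5 and 11 are taken from the standard COCO label list because the printed `names` dict elides them.

```python
# Rows copied from the printed tensor: x1, y1, x2, y2, confidence, class index.
ROWS = [
    [24.0, 226.0, 802.0, 758.0, 0.875480, 5.0],
    [48.0, 397.0, 246.0, 906.0, 0.874487, 0.0],
    [670.0, 379.0, 810.0, 877.0, 0.853311, 0.0],
    [219.0, 406.0, 344.0, 859.0, 0.816101, 0.0],
    [0.0, 254.0, 32.0, 325.0, 0.491605, 11.0],
    [0.0, 550.0, 64.0, 876.0, 0.376493, 0.0],
]

# Assumption: COCO labels for the class indices that occur in ROWS.
NAMES = {0: "person", 5: "bus", 11: "stop sign"}


def decode_rows(rows, names, min_conf=0.5):
    """Turn raw rows into dicts, dropping detections below min_conf."""
    detections = []
    for x1, y1, x2, y2, conf, cls in rows:
        if conf < min_conf:
            continue
        detections.append({
            "label": names[int(cls)],
            "confidence": round(conf, 3),
            "width": x2 - x1,
            "height": y2 - y1,
        })
    return detections


if __name__ == "__main__":
    for det in decode_rows(ROWS, NAMES):
        print(det)
```

With the 0.5 threshold used here, the bus and three of the four person detections are kept, while the low-confidence stop sign and the fourth person are dropped.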

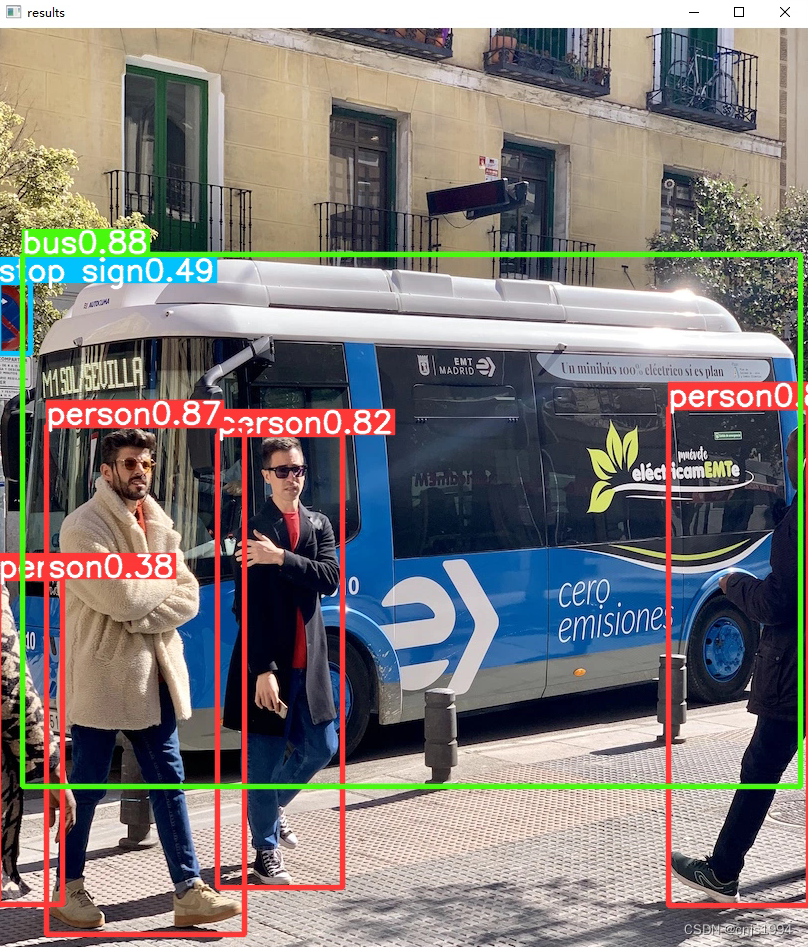

- Visualized result

Summary:

- Avoid the error caused by multiprocessing

Error: This probably means that you are not using fork to start your
child processes and you have forgotten to use the proper idiom
in the main module:

    if __name__ == '__main__':
        freeze_support()
        ...

The "freeze_support()" line can be omitted if the program
is not going to be frozen to produce an executable.

- Turn off any VPN or proxy tool before training; otherwise the model file may fail to download

Error: requests.exceptions.ProxyError: HTTPSConnectionPool(host='pypi.org', port=443): Max retries exceeded with url: /pypi/ultralytics/json (Caused by ProxyError('Cannot connect to proxy.', FileNotFoundError(2, 'No such file or directory')))

- OpenCV package error; see 【2】 for details

The function is not implemented. Rebuild the library with Windows, GTK+ 2.x or Cocoa support.
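Both the proxy error and the OpenCV error can be spotted before a training run starts. The following is a rough pre-flight sketch using only the standard library. It rests on two assumptions of mine, not statements from this post: that the proxy is exposed through the usual `HTTP_PROXY` / `HTTPS_PROXY` / `ALL_PROXY` environment variables (system-level proxy settings on Windows would not show up here), and that the OpenCV error comes from a headless OpenCV build, which is the situation discussed in 【2】. The helper names are hypothetical.

```python
import os
from importlib.metadata import PackageNotFoundError, version

PROXY_VARS = ("HTTP_PROXY", "HTTPS_PROXY", "ALL_PROXY")
OPENCV_PACKAGES = (
    "opencv-python",
    "opencv-python-headless",
    "opencv-contrib-python",
    "opencv-contrib-python-headless",
)


def active_proxies(env):
    """Return the non-empty proxy variables in env, matching names case-insensitively."""
    return {k: v for k, v in env.items() if k.upper() in PROXY_VARS and v}


def installed_opencv_builds():
    """Map each installed OpenCV wheel name to its version."""
    found = {}
    for name in OPENCV_PACKAGES:
        try:
            found[name] = version(name)
        except PackageNotFoundError:
            pass
    return found


if __name__ == "__main__":
    for name, value in active_proxies(os.environ).items():
        print(f"proxy variable set: {name}={value}")
    builds = installed_opencv_builds()
    for name, ver in builds.items():
        print(f"{name} {ver}")
    if any(name.endswith("-headless") for name in builds):
        print("a headless OpenCV build is installed; GUI calls such as cv2.imshow may fail")
```

If a headless build shows up, the usual remedy is to uninstall it and install the regular `opencv-python` package instead, but check 【2】 for the details that apply to your setup.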

References

【1】Official YOLOv8 GitHub repository

【2】https://stackoverflow.com/questions/74035760/opencv-waitkey-throws-assertion-rebuild-the-library-with-windows-gtk-2-x-or
